Disasters that affect your critical workloads and business come in many forms. They can be natural disasters such as floods, fires, or earthquakes, or technological disasters such as power failures, cyber attacks, and data breaches. Disaster recovery scenarios can also include human-caused events such as explosions, terrorist attacks, or major production errors. Disasters can occur at any time and in any form, and they are often unavoidable. The key is to always be prepared with a recovery plan. No matter what disaster strikes, a disaster recovery plan can help you protect your data, restore your IT infrastructure, and recover your business operations. Disaster recovery in Google Kubernetes Engine (GKE) involves implementing strategies and techniques to ensure business continuity in case of system failures, natural disasters, or other disruptive events.

Significant infrastructure downtime can have a devastating impact on an enterprise business. This impact is not just monetary. In many cases, the impact on a business’s reputation can be even more catastrophic and take longer to recover from, if ever. As a result, enterprises are increasingly mandating the implementation of robust backup and disaster recovery solutions to provide them with insurance against the unexpected. This helps to avoid costly downtime, lost productivity, and even business failure.

Backup and disaster recovery by Google Cloud

By implementing backup and disaster recovery in Google Cloud, you can help protect your critical cloud workloads from a variety of threats and keep your business operating even in the event of a disaster. This can give you peace of mind and help you focus on running your business. Every workload is unique, so Google Cloud offers a variety of protection strategies to choose from, depending on your business and industry. It enables on-demand resource allocation for unexpected events, which minimizes the cost of support and helps you focus on backing up your critical workloads.

Designing Disaster Recovery Strategies

- Identify critical functions: List the most important things your company does. These are the functions that must be recovered quickly during a disaster.

- Define Recovery Time Objective (RTO) and Recovery Point Objective (RPO): RTO is the maximum time your organization allows for restoring normal operations following an outage or data loss. RPO is the maximum amount of data, usually expressed as a window of time, that the organization can tolerate losing. You need to determine how much data you need to protect and how quickly you need to recover when a disaster happens. The lower the RTO and RPO requirements, the higher the cost of disaster recovery.

- Assess your risks: Assess the types of disasters most likely to impact your organization. Once you know your risks, you can start developing strategies to mitigate them. Your recovery plan should include steps for recovering critical business functions. It should also include a timeline for recovery and a budget.

- Test the plan: Review your recovery plan regularly, run tabletop exercises, and simulate disaster scenarios to make sure your disaster recovery plan is effective and up to date. Consider the additional cost and capacity needed for running these exercises. Ensure your organization's key stakeholders know their roles and understand your disaster recovery plan. While planning for disaster recovery, be flexible. No two disasters are the same, so your plan should be able to adapt to different situations.
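The RTO and RPO definitions above can be turned into a quick sanity check. The following is a minimal sketch, not a prescribed method: the class name, the function name, and the example targets (a 4-hour RTO and a 1-hour RPO) are all illustrative assumptions. It compares a backup interval and a rehearsed restore time against the objectives.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class RecoveryObjectives:
    rto: timedelta  # maximum acceptable time to restore normal operations
    rpo: timedelta  # maximum acceptable window of lost data


def meets_objectives(
    objectives: RecoveryObjectives,
    backup_interval: timedelta,
    measured_restore_time: timedelta,
) -> dict[str, bool]:
    """Compare a backup schedule and a rehearsed restore time with the targets.

    Worst-case data loss is taken to be the gap between two backups,
    because a disaster can strike just before the next backup would run.
    """
    return {
        "rpo_met": backup_interval <= objectives.rpo,
        "rto_met": measured_restore_time <= objectives.rto,
    }


# Hypothetical targets: restore within 4 hours, lose at most 1 hour of data.
targets = RecoveryObjectives(rto=timedelta(hours=4), rpo=timedelta(hours=1))

# Daily backups miss the 1-hour RPO even though the restore is fast enough.
daily = meets_objectives(targets, timedelta(hours=24), timedelta(hours=2))
# Backups every 30 minutes satisfy both objectives.
frequent = meets_objectives(targets, timedelta(minutes=30), timedelta(hours=2))

print(daily)
print(frequent)
```

Tightening either objective usually means more frequent backups or faster restore paths, which is where the additional cost comes from.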

High-level approach of GKE on disaster recovery

Different components in GKE for disaster recovery

- Node Auto Repair: Repairs unhealthy nodes in a GKE cluster. When enabled, GKE periodically checks the health of each node in the cluster. If a node fails consecutive health checks over an extended period, GKE initiates a repair process for that node. The repair process for an unhealthy node may involve one or more of the following steps.

Step 1. GKE starts draining the node, which stops new pods from being scheduled on it.

Step 2. GKE then replaces the node and migrates pods from the unhealthy node to the new node.

Step 3. GKE scales the cluster by creating additional nodes to compensate for the loss of the unhealthy nodes.

This keeps your cluster healthy, reduces manual intervention, improves availability, and reduces costs.

- Liveness Probe: GKE allows you to specify a liveness check that runs periodically to ensure that a container in your pod is running and healthy. When you configure a liveness probe in your pod spec, the kubelet checks the container at the interval you set (for example, every 5 seconds). If the container fails the probe a configured number of times in a row (three by default), it is restarted.

- Persistent Volume: GKE safeguards storage availability through the persistent volume abstraction. Persistent volumes are a Kubernetes resource that provides a way to store data that persists even if the pods that use the data are deleted. They are backed by physical storage, such as Google Compute Engine persistent disks. This is useful for storing data that needs to remain available even if a pod fails or is restarted.

- Multi-cluster Gateway: The multi-cluster gateway supports internal and external load balancing, weight-based traffic splitting, traffic capacity-based load balancing, and traffic mirroring between your clusters.

- Multi-Cluster Ingress: Multi-Cluster Ingress lets you configure shared load balancing resources across multiple GKE clusters in different regions. It improves application availability, reduces operational complexity, increases scalability, and provides a single entry point for users to access applications and manage traffic across multiple clusters.
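To make the Liveness Probe item above more concrete, here is a minimal sketch that builds a Pod manifest with an HTTP liveness probe and estimates how long an unhealthy container may run before it is restarted. `livenessProbe`, `httpGet`, `periodSeconds`, and `failureThreshold` are standard Kubernetes Pod fields; the Pod name, image, port, and `/healthz` path are hypothetical placeholders, and the estimate ignores probe timeouts and any initial delay.

```python
import json


def pod_with_liveness_probe(period_seconds: int, failure_threshold: int) -> dict:
    """Build a Pod manifest whose single container has an HTTP liveness probe."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "web"},  # hypothetical Pod name
        "spec": {
            "containers": [
                {
                    "name": "web",
                    "image": "example.com/web:1.0",  # hypothetical image
                    "livenessProbe": {
                        # Hypothetical health endpoint exposed by the app.
                        "httpGet": {"path": "/healthz", "port": 8080},
                        "periodSeconds": period_seconds,
                        "failureThreshold": failure_threshold,
                    },
                }
            ]
        },
    }


def approx_seconds_until_restart(manifest: dict) -> int:
    """Rough estimate: consecutive failed probes multiplied by the probe period."""
    probe = manifest["spec"]["containers"][0]["livenessProbe"]
    return probe["periodSeconds"] * probe["failureThreshold"]


manifest = pod_with_liveness_probe(period_seconds=5, failure_threshold=3)
print(json.dumps(manifest, indent=2))
print(approx_seconds_until_restart(manifest))
```

Because `kubectl` accepts JSON manifests as well as YAML, the printed document could be saved to a file and applied to a test cluster after replacing the placeholder values.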

Preparing for high-availability

Spreading the Kubernetes control plane and its nodes across different zones or regions is essential to achieving high availability for your workloads.

- Choose to deploy your Kubernetes workload in a regional or a zonal cluster.

- Choose multi-zonal or single-zone node pools. Within zonal clusters, GKE offers two types: single-zone and multi-zonal.

Single-zone clusters have one control plane and their worker nodes in the same zone. Multi-zonal clusters are similar to single-zone clusters, but their nodes span multiple zones. A regional or multi-zonal cluster provides higher availability than a single-zone cluster.

Regional clusters are better suited for high availability, as they run multiple replicas of the control plane across multiple compute zones in a region. In contrast, zonal clusters have one control plane in a single compute zone. However, if cost is a factor or your workload is not as critical, a multi-zonal cluster could be a better choice.

In regional clusters, the control plane remains available during cluster maintenance, such as rotating IPs, upgrading control plane VMs, or resizing clusters or node pools. When a regional cluster is upgraded, two out of three control plane VMs keep running during the rolling upgrade, so the Kubernetes API is still available. Similarly, a single-zone outage won't cause downtime in the regional control plane.
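The difference between the cluster types can be illustrated with a small model. This is a simplified sketch under stated assumptions, not a description of GKE internals: the `Cluster` class, the `survives_outage` function, and the zone names are hypothetical, and the model only asks whether any control plane replica or node remains outside the failed zone.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cluster:
    name: str
    control_plane_zones: frozenset[str]  # zones hosting a control plane replica
    node_zones: frozenset[str]           # zones hosting worker nodes


def survives_outage(cluster: Cluster, failed_zone: str) -> dict[str, bool]:
    """Report what remains reachable when a single zone goes down."""
    return {
        "api_available": bool(cluster.control_plane_zones - {failed_zone}),
        "nodes_available": bool(cluster.node_zones - {failed_zone}),
    }


zones = frozenset({"zone-a", "zone-b", "zone-c"})  # placeholder zone names
single_zone = Cluster("single-zone", frozenset({"zone-a"}), frozenset({"zone-a"}))
multi_zonal = Cluster("multi-zonal", frozenset({"zone-a"}), zones)
regional = Cluster("regional", zones, zones)

for cluster in (single_zone, multi_zonal, regional):
    print(cluster.name, survives_outage(cluster, "zone-a"))
```

In this model, losing `zone-a` takes down both the API and the nodes of the single-zone cluster, leaves the multi-zonal cluster with running nodes but no control plane, and leaves the regional cluster with both.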

Backup for GKE

Data protection for Kubernetes workloads

Backup for GKE is a fully managed service that helps you protect, manage, and restore your containerized applications and data in a simple, scalable, and secure way. It provides integrated backup for stateful workloads. With Backup for GKE, you can meet your service level objectives and automate backup and recovery tasks. You can protect Kubernetes resources, including namespaced resources, cluster-wide resources, and persistent volumes, along with application data, databases, file systems, and logs. You can seamlessly restore your backups to a new or existing Kubernetes cluster.

Backup Options

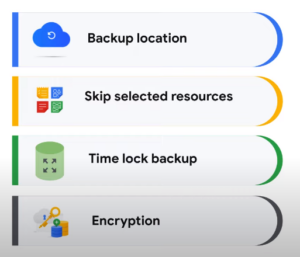

Backup for GKE is configurable, flexible, and easy for enterprises to adopt. The backup options allow you to select preferred backup destinations and to choose which resources to include or skip. You can configure a backup to exclude secrets so that this data is not visible via the persistent disk control plane. By enabling time-locked backups, you can block manual or automated deletion of backups to protect against malicious attacks. All data is encrypted by default, with the option of using customer-managed encryption keys (CMEK).
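The options above can be pictured as a single backup plan definition. The sketch below assembles such a definition as a plain Python dictionary. The field names (`backupSchedule`, `retentionPolicy`, `backupConfig`, and their children) follow my understanding of the Backup for GKE `BackupPlan` resource and should be verified against the API reference before use; the helper name, cluster path, and schedule are hypothetical. Nothing here calls the API.

```python
from typing import Optional


def backup_plan_body(
    cluster: str,
    cron_schedule: str,
    retain_days: int,
    delete_lock_days: int,
    include_secrets: bool = False,
    kms_key: Optional[str] = None,
) -> dict:
    """Assemble a backup plan definition (field names are assumptions)."""
    # Sanity check: a backup that is locked against deletion for N days
    # should not be scheduled to expire before the lock ends.
    if retain_days < delete_lock_days:
        raise ValueError("retain_days must be >= delete_lock_days")

    backup_config = {
        "allNamespaces": True,
        "includeVolumeData": True,
        "includeSecrets": include_secrets,  # False keeps secrets out of backups
    }
    if kms_key is not None:
        # Customer-managed encryption key (CMEK) instead of the default key.
        backup_config["encryptionKey"] = {"gcpKmsEncryptionKey": kms_key}

    return {
        "cluster": cluster,
        "backupSchedule": {"cronSchedule": cron_schedule},
        "retentionPolicy": {
            "backupRetainDays": retain_days,
            "backupDeleteLockDays": delete_lock_days,
        },
        "backupConfig": backup_config,
    }


plan = backup_plan_body(
    # Hypothetical cluster path; replace with your own project and cluster.
    cluster="projects/my-project/locations/us-central1/clusters/my-cluster",
    cron_schedule="0 2 * * *",  # daily at 02:00
    retain_days=30,
    delete_lock_days=7,
)
print(plan)
```

Keeping the plan as data makes it easy to review settings such as the deletion lock and secret exclusion before they are applied through the console, CLI, or API.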

Restore Options

The restore option lets you restore a backup into a new cluster or a different region, with the flexibility to parameterize the restore, for example to use different storage classes. The sub-scope feature lets you restore a specific namespace or application if it is deleted or an upgrade fails. Through delegated administration, cluster admins can give app admins access to take ad hoc backups before critical application upgrades.

How does GKE Backup work

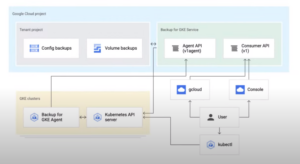

The diagram above shows the relationship between the different components of Backup for GKE.

A resource-based REST API serves as the control plane for Backup for GKE, and the Google Cloud Console includes UI elements that interact with this API. An agent runs in every cluster where backups or restores are performed and carries out backup and restore operations by interacting with the Backup for GKE API.

Conclusion

A disaster recovery plan tailored to your application's requirements and business needs is essential. GKE provides various tools and features to assist with disaster recovery, but a robust and reliable recovery strategy still requires careful planning and design.

Metclouds Technologies helps you to maintain the availability of containerized applications.