Recently, we had to set up a Varnish load balancer for a website with 1 million visitors per day. Provisioning a load balancer at this scale is challenging, and it took several rounds of benchmarking to arrive at the optimal setup.

Let us see how we set up the load balancer to support 1 million visitors.

Resources Used

Front-end Server

One front-end proxy server configured with Nginx + Varnish 3.0

Specification: 30 GB RAM, 16 CPU cores, 50 GB SSD

Amazon EC2: c4.4xlarge

Backend Server

Backend server 01: web server (Windows)

Backend server 02: web server (Windows)

Specification: 122 GB RAM, 16 CPU cores, 200 GB SSD

Amazon EC2: r4.4xlarge

Database Server

Amazon RDS with Multi-AZ Deployments

Architecture

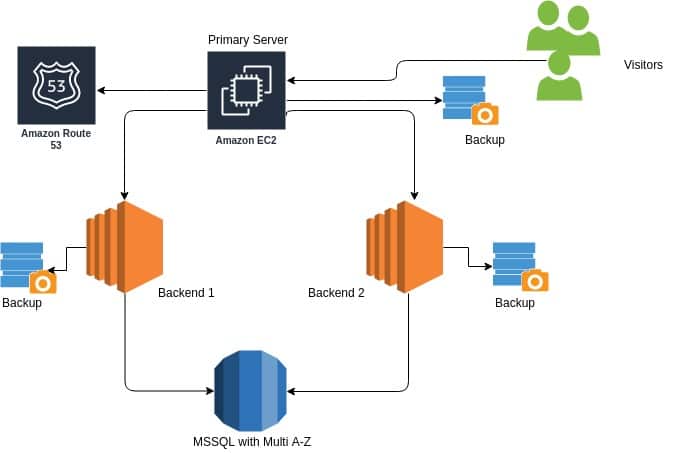

In this architecture, the primary proxy server is configured with Nginx + Varnish 3.0. For DNS management, Amazon Route 53 is used. The domain must point to the primary proxy server.

In the Varnish configuration, the backend servers are defined and grouped under a round-robin director. Varnish therefore sends requests to each backend in turn, and CPU usage stays balanced across them.
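A round-robin director in Varnish 3.0 VCL can be sketched as follows. This is a minimal illustration and not the contents of live.vcl. The backend IP addresses and the director name `web_director` are hypothetical placeholders. Only requests that Varnish actually forwards to a backend (cache misses and passes) go through the director, because cache hits are answered from memory.

```vcl
# Hypothetical private IPs of the two Windows web servers; replace with your own.
backend web1 {
    .host = "10.0.1.11";
    .port = "80";
}

backend web2 {
    .host = "10.0.1.12";
    .port = "80";
}

# Varnish 3.0 round-robin director: each backend fetch goes to the next server in turn.
director web_director round-robin {
    { .backend = web1; }
    { .backend = web2; }
}

sub vcl_recv {
    # Send all backend traffic through the director (Varnish 3.0 uses req.backend).
    set req.backend = web_director;
}
```

Because this `vcl_recv` does not `return`, Varnish 3.0 continues into its built-in `vcl_recv` logic afterwards.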

All static content is cached on the Varnish server, and grace mode is enabled for 6 hours, which reduces the CPU overhead on the backends.

We have two backend servers to serve the website content. The database runs on Amazon RDS with Multi-AZ replication, which acts as a failover: if the primary RDS instance fails or crashes, the standby instance is automatically promoted to primary.
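The following is a minimal Varnish 3.0 VCL sketch of caching static files with a 6-hour grace period. The list of file extensions and the 1-day TTL for static files are assumptions chosen for illustration, not values taken from the original configuration.

```vcl
sub vcl_recv {
    # Static assets (assumed extension list): drop cookies so Varnish can cache them.
    if (req.url ~ "\.(css|js|png|jpe?g|gif|ico|svg|woff2?)(\?.*)?$") {
        unset req.http.Cookie;
    }
    # Accept a cached object up to 6 hours past its TTL while a fresh copy is fetched.
    set req.grace = 6h;
}

sub vcl_fetch {
    # Keep objects 6 hours beyond their TTL so they remain available for grace mode.
    set beresp.grace = 6h;
    if (req.url ~ "\.(css|js|png|jpe?g|gif|ico|svg|woff2?)(\?.*)?$") {
        # Without this, the built-in vcl_fetch would not cache responses that set cookies.
        unset beresp.http.Set-Cookie;
        set beresp.ttl = 1d;   # hypothetical TTL for static files
    }
}
```

Grace mode requires both settings. `beresp.grace` controls how long a stale object is kept, and `req.grace` controls how stale an object a request will accept.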

Varnish Load Balancer Configuration

The sample configuration below sets up Varnish as a load balancer with two backends. If your backend servers' resource capacity is low, you can add more backends named web1, web2, web3, web4, and so on.

Configuration file: /etc/varnish/live.vcl

Get the code from https://raw.githubusercontent.com/xieles/varnish/master/live.vcl
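As a hypothetical illustration of scaling out, the Varnish 3.0 VCL sketch below adds a third backend and a shared health probe. The IP addresses, the probe name `web_health`, and the probe timings are assumptions and do not come from live.vcl. With a probe attached, the director skips any backend that is marked unhealthy.

```vcl
# Hypothetical health check: probe "/" every 5s; healthy if 3 of the last 5 checks pass.
probe web_health {
    .url = "/";
    .interval = 5s;
    .timeout = 2s;
    .window = 5;
    .threshold = 3;
}

backend web1 { .host = "10.0.1.11"; .port = "80"; .probe = web_health; }
backend web2 { .host = "10.0.1.12"; .port = "80"; .probe = web_health; }
backend web3 { .host = "10.0.1.13"; .port = "80"; .probe = web_health; }

# Adding a server means adding one backend block and one line here.
director web_director round-robin {
    { .backend = web1; }
    { .backend = web2; }
    { .backend = web3; }
}

sub vcl_recv {
    set req.backend = web_director;
}
```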

For backups, AWS snapshots and RDS DB snapshots are enabled with a retention of one hour.

We ran several benchmark tests with Apache JMeter and concluded that this architecture can handle up to 1 million requests. In our tests, responses were about 10 times faster than when served directly by the web servers. This is because all static content is cached by Varnish, and only dynamic pages such as the cart and signup forms are served from the backend servers.
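The following is a minimal Varnish 3.0 VCL sketch of sending per-user pages such as the cart and signup forms straight to the backends. The URL prefixes `/cart` and `/signup` are hypothetical and should be adjusted to the site's real paths.

```vcl
sub vcl_recv {
    # Hypothetical paths for per-user pages: never cache them, always go to a backend.
    if (req.url ~ "^/(cart|signup)") {
        return (pass);
    }
}
```

The built-in Varnish 3.0 `vcl_recv` already passes requests that are not GET or HEAD (for example, form POSTs). The rule above additionally covers GET requests for these pages.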

Xieles Support can help you speed up your website. Get a quote from us if you need any assistance.


Xieles Support is a provider of reliable and affordable internet services, consisting of Outsourced 24×7 Technical Support, Remote Server Administration, Server Security, Linux Server Management, Windows Server Management and Helpdesk Management to Web Hosting companies, Data centers and ISPs around the world. We are experts in Linux and Windows Server Administration, Advanced Server Security, Server Security Hardening. Our primary focus is on absolute client satisfaction through sustainable pricing, proactively managed services, investment in hosting infrastructure and renowned customer support.